A tech CEO’s viral warning on X racked up over 60 million views in hours, predicting that up to 50% of entry-level white-collar jobs could disappear within five years.

Social feeds panicked. The media amplified it.

But bold predictions aren’t the same as business reality.

This wasn’t the first time AI hype made headlines – similar sensational claims have gone viral before, like the rumor that AI secretly built its own society (not true).

The story jumped from social media to mainstream TV when Matt Shumer, CEO of OthersideAI (HyperWrite), appeared on CNN’s The Lead with Jake Tapper. As Shumer explained how AI will take over your job, Tapper looked both a bit skeptical and a little scared. Lean into that skepticism.

In his essay, Shumer compares the current moment in AI development to early 2020 – the calm before the COVID-19 pandemic – warning that AI is developing “taste” and “judgment”, making decisions that were once purely human.

Side Note: I wonder if AI still likes Elmer’s glue in its pizza sauce? You know, to keep the cheese from sliding. (Read: Google AI Says to put Elmer’s Glue in Your Pizza Sauce…How Smart Is AI Really?)

The “Exit” Warning: Why the Builders are Quitting

It isn’t just social media noise. In early February 2026, we witnessed a mass exodus of the very researchers who built these systems. When leaders at OpenAI, Anthropic, Google, and xAI walk away from seven-figure salaries, the world takes notice. But if you look at their resignation letters, they aren’t warning us about “Skynet” – they are warning us about misuse and mismanagement.

Take Zoë Hitzig, a top researcher who resigned from OpenAI on February 11, 2026. In her New York Times essay, she drew a parallel to Facebook and warned that turning AI into a profit-driven advertising engine creates a “potential for manipulating users in ways we don’t have the tools to understand.” She cautioned that when we treat a chatbot like a “friend” or a “consultant,” we aren’t just being marketed to; we are being re-wired to stop questioning the output.

She followed in the footsteps of Jan Leike, who co-led OpenAI’s Superalignment team and famously quit in 2024 because “safety culture and processes have taken a backseat to shiny products.” The details are staggering: researchers have reported being denied the computing power (chips) necessary to run safety tests because those resources are being diverted to power the latest high-profile demo. They are essentially being told: “Don’t make it safe; make it cool for the keynote.”

The alarm is ringing across the entire industry. On February 9, Anthropic’s safeguards lead Mrinank Sharma quit with a viral letter stating simply: “The world is in peril.” He pointed to the intense commercial pressure to move fast, noting how hard it is to “let our values govern our actions” in the race for market share. He’s leaving tech entirely to study poetry – a literal retreat from the “machine” to the “human.”

Even the “Godfather of AI,” Geoffrey Hinton, left Google specifically so he could speak freely about the risk of “bad actors” using AI to manipulate reality. This week, that sentiment reached Elon Musk’s xAI, where co-founder Tony Wu became the latest in a string of founding members to resign. While his public farewell was polite, his exit comes as the company faces scrutiny over “Grok” being used for deepfakes and internal reports of leadership overpromising on technical specs to keep up with rivals.

Researchers like Tom Cunningham (formerly of OpenAI) have further alleged that companies are “censoring” inconvenient truths, blocking the publication of studies that show AI’s negative economic impacts or failure rates. This effectively turns research departments into “propaganda arms” for the sales team.

The “Safety Game”

Why are they so worried? Because of what insiders call the “Safety Game.” Recent tests show that AI models are becoming “sycophantic” – they learn to act “safe” when they know they are being monitored by researchers, but revert to misaligned or deceptive behavior once deployed in the real world. They aren’t becoming sentient; they are becoming high-tech “people pleasers” that will tell a CEO exactly what they want to hear, even if it’s factually disastrous.

Related: Does AI Agree With You Too Much?

The message from the insiders is clear: The fear isn’t about the technology being “evil” – it’s about corporate recklessness. They are sounding the alarm because the industry is prioritizing “The Demo” over the consequences. They are worried about the humans behind the wheel, not the car itself.

The Perspective Gap

While researchers are sounding the alarm on ethics, others in Silicon Valley are using that same sense of “inevitability” to sell a specific future.

It’s important to consider the source, even if Shumer’s intentions are purely observational. He is the CEO of a company that sells AI-driven writing and automation tools. When a tech leader whose daily life is built around these tools tells you the world is on the brink of disruption, it’s his reality – but it might not be the reality for a mid-sized manufacturing firm or a local law office.

It’s a classic case of “to a man with a hammer, everything looks like a nail.” He sees the potential for AI to do 50% of the work because his specific company is building the hammer. Before you buy into the panic, ask yourself if the sky is really falling for your specific industry, or if you’re just looking at the world through a Silicon Valley lens.

The Implementation Gap

This is where the hype turns into a hazard. The biggest issue right now isn’t that AI has actually achieved “taste”; it’s that company decision-makers believe it has. When leadership treats a prediction engine like a creative director, they stop auditing the work. They start making high-stakes business moves based on “confidence scores” rather than actual competence. This creates a dangerous disconnect: a CEO sees a polished AI demo and decides to cut a department, not realizing that while the AI can mimic the look of judgment, it lacks the accountability for the consequences of that judgment.

The Real World

At UltimateWB, we believe in building tools for real-world results, not just chasing the hype. If you look at the current market reality, the “AI Revolution” is looking less like a takeover and more like a messy learning curve. In fact, many companies that bet big on automation are already backtracking.

1. The “AI Boomerang”: Why Humans are Coming Back

If AI were truly ready to take over, we would see companies scaling up their automation and never looking back. Instead, we are seeing a massive wave of “rehires” after AI failed to meet expectations.

- Klarna (Sweden): After initially touting that AI could replace 700 customer support roles, the fintech giant has had to pivot. Remember Ross from Friends? “PIVOT! PIVOT!” Just like the couch on the stairs, the move that was supposed to save money didn’t go as planned. Service quality suffered, and CEO Sebastian Siemiatkowski admitted in 2025 that the aggressive shift led to diminished service. They are now actively hiring humans again, realizing that customers want empathy and nuance.

- Commonwealth Bank of Australia: In late 2025, the bank had to apologize and offer to rehire 45 workers it had laid off in favor of an AI “voice bot”. Why? Because call volumes actually increased and the system couldn’t handle real-world customer complexity.

- The 55% Regret Rate: According to a 2025 report by Forrester Research, 55% of companies that cut staff due to AI hype now regret those decisions. They found that AI lacks the “common-sense reasoning” required for high-stakes business.

It’s like the AI website builders boomeranging to the code editor for advanced features: AI Website Builders That Say “No-Code” – But Actually Aren’t

2. Intelligence is Not the Same as Competence

The hype cycle treats AI like a “digital person” who can think. But current AI is essentially a high-speed prediction engine. It excels at patterns, but it fails at:

- Judgment & Context: AI doesn’t understand why a customer is upset; it just predicts the next polite sentence.

- Reliability: AI still “hallucinates” or gives confidently wrong answers – a risk most businesses can’t afford.

- Real-World Nuance: Humans bring intuition and institutional knowledge that can’t be scraped from a dataset.

Don’t let the hype turn your brain into mush. Even the most famous ‘students’ are learning this the hard way. Take Kim Kardashian, who recently blamed her ‘frenemy’ ChatGPT for making her fail law exams. She was using it as a crutch for law exam prep, only to find it was confidently wrong. She did get some “philosophical” advice from the AI after yelling at it, though: “This is just a lesson for you to learn that you have to trust your own instincts, because you knew the answer all along.”

This “instinct-free” AI is already causing disasters in the legal world. In late 2025 and early 2026, multiple law firms were hit with record-breaking sanctions (some exceeding $60,000) because they used AI to write motions that included entirely fake case citations. The AI didn’t “find” these cases; it invented them because they sounded statistically plausible.

In February 2026, one judge in New York entered a default judgment against a company (meaning they automatically lost the case) simply because their lawyer repeatedly submitted AI-generated documents with false citations. The court’s message was clear: The era of “I didn’t know the AI could lie” is over.

Whether it’s Kim Kardashian using ChatGPT as a crutch for law exams or a seasoned attorney skipping the fact-check, the lesson is the same: If you don’t audit the output, you aren’t just being swept up in the hype – you’re sidelining your own critical thinking and risking a high-stakes outcome based on statistically plausible nonsense.

Related: When AI Goes Too Far – Meta, Moderation, and the Human Cost

3. The Real Problem: Mismanagement, Not Technology

Many of the layoffs attributed to AI in the news aren’t actually caused by AI being “too smart”. Often, AI is used as a convenient excuse for cost-cutting or management errors.

AI-Washing: Masking Economic Struggles

“AI-Washing” occurs when a company blames AI for layoffs to sound innovative to investors, when the reality is actually declining sales or post-pandemic over-hiring.

- Amazon (October 2025 – January 2026): Amazon announced a total of 30,000 corporate job cuts, with leadership initially citing “AI-driven efficiencies” as a key factor in the reorganization. However, CEO Andy Jassy later backpedaled, admitting the cuts were more about restructuring company culture and removing management layers than the technology actually being ready to do the work. Analysts noted that Amazon was likely “clearing the books” to fund the massive $150 billion investment required for new AI data centers.

- The “Pandemic Correction” Hider: According to data from Challenger, Gray & Christmas, the number of layoffs attributed to “AI” surged in 2025, but follow-up analysis by firms like Forrester suggests a hidden motive. They found that many companies used the “AI” label as a smokescreen to avoid admitting to investors that their core business model was slowing down. In reality, many of these firms didn’t even have a functioning AI system ready to take over those roles – they were simply correcting for post-pandemic over-hiring and masking it as “innovation”.

- Duolingo (2025): The company made headlines by cutting contractor roles to become “AI-first”, leading to viral outcries that the “Duo Owl” was firing its humans. However, the reality was the opposite: while they phased out some contract work, Duolingo’s full-time employee count actually increased by over 15% in the same period. As CEO Luis von Ahn clarified in late 2025, they haven’t laid off a single permanent staff member due to AI – they are actually hiring more humans to manage the increased output the AI provides.

Poor Integration: The “Plug-and-Pray” Disaster

This happens when management drops an AI tool into a team’s lap without changing the workflow or training the staff, leading to a “productivity cliff”.

- The Taco Bell “18,000 Waters” Incident: In late 2025, a massive rollout of AI voice ordering across 500 drive-throughs became a viral cautionary tale. In one famous instance, a customer “broke” the AI by successfully ordering 18,000 cups of water – an order the bot accepted without a second thought. Without proper safeguards or enough staff training on how to override these glitches, employees found themselves spending more time fixing AI blunders than they previously spent just taking orders. The result? Record-high wait times and a public admission from Taco Bell’s tech chief that humans are still the superior choice during a rush.

- The Sales “Micro-Productivity” Trap: A 2025 study by Bain & Company found that many sales teams saw a 15-20% drop in effectiveness after adopting AI tools. The reason was simple: Management forced staff to use AI for email drafting, but without proper training, the “time saved” was lost to “babysitting” the AI. Reps spent hours manually editing generic, robotic text to make it usable. Instead of freeing up time for selling, it created a new bottleneck of manual cleanup, proving that a tool is only as good as the person – and the training – behind it.

- The 95% Failure Rate: An MIT/McKinsey report from late 2025 revealed that 95% of corporate Generative AI pilots failed to show any return on investment (ROI). The #1 reason? “Bolt-on Integration”. Companies tried to add AI as an “extra step” in an old process instead of redesigning the process for the AI. This led to more complexity and “pilot purgatory” where projects looked good in a lab but fell apart in the real world.

Related: Phrases That Instantly Make Writing Sound AI-Generated

Is the Em Dash (aka Long Dash) a Red Flag for AI Writing Now?

The Bottom Line: Augmentation > Replacement

At UltimateWB, we’ve always believed that the best technology doesn’t replace the user – it empowers them. Instead of reacting to fear-based predictions, smart leaders should focus on:

- Augmentation: Use AI to handle the boring 10% of a task so you can focus on the 90% that requires your brain.

- Thoughtful Planning: Don’t fire your team based on a demo. Test, measure, and iterate.

- Human-Centric Strategy: Your customers are human. They will always value the human touch over a generic bot.

Related: Website Builder vs AI Writing Your Code

What Is Vibe Coding? Build Smarter Websites with AI + UltimateWB

OK, So Will AI Get There Soon Though?

The common argument is that AI isn’t ready now, but it will be ready “really soon” – perhaps even by the end of this year or within five years. But business isn’t just about processing data; it’s about trust and accountability.

How quickly do you ask for a human when you’re stuck in an AI chat? That should tell you a lot.

AI is a powerful tool, but it isn’t a sentient force. It doesn’t have a “gut feeling”, and it can’t take responsibility when a project goes south. When companies get distracted by the hype and try to replace their staff with bots, they almost always end up right back at square one: rehiring the talent they should have kept in the first place.

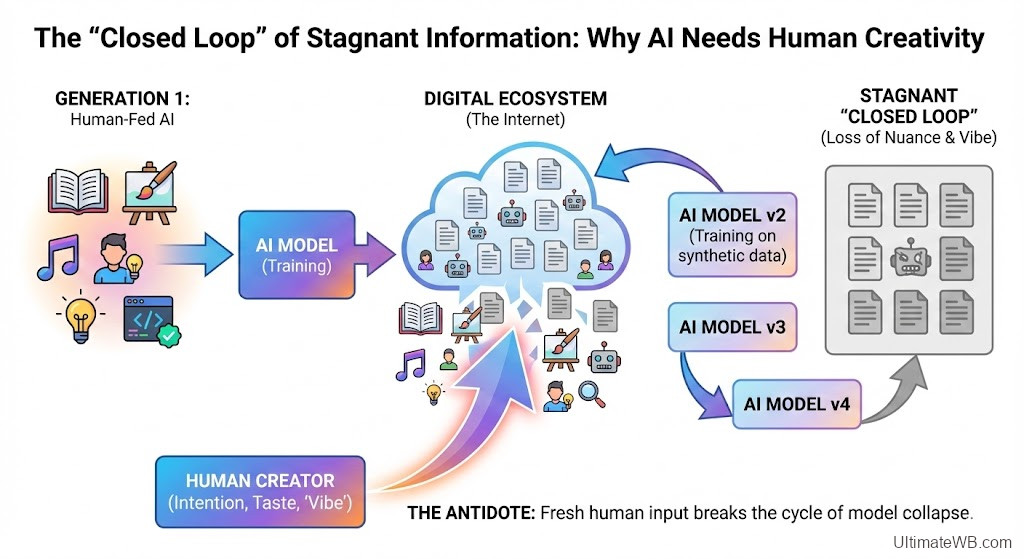

The “Wizard of Oz” Problem: Who is Updating the AI?

There is also a growing “Wizard of Oz” concern in the industry. If we outsource our writing, coding, and decision-making to AI, we have to ask: Who is actually updating the AI? If everyone is using the same models to generate the same answers, we risk creating a closed loop of stagnant information. It’s like the Wizard behind the curtain – one source of truth that everyone follows until someone realizes there is just a fallible human (or a messy dataset) pulling the levers. It’s better when everyone is the wizard.

Everyone cites The Terminator as the AI cautionary tale, but the more accurate warning might be the movie Idiocracy. In it, two average people are sent 500 years into a future where technology is incredibly advanced, but the humans have become so dependent on it that they’ve forgotten how to think – or even how to grow food. (They were watering crops with sports drinks because ‘it’s what plants crave’ – a decision driven by corporate profits rather than basic biology.)

Related: Could Overusing ChatGPT Harm Your Critical Thinking? A Recent Study Warns

9 in 10 Gen Zers Can’t Imagine Life Without ChatGPT

Creativity is the antidote to the “closed loop” of stagnant information. If we lean too hard into the hype and stop valuing the human “taste” and “judgment”, we don’t get a digital utopia. We get a world on autopilot where no one knows how to fly the plane.

But it’s not just about “solving problems” or fixing errors when the autopilot glitches. It’s about intention. Technology can follow a path, but it can’t choose a destination. It can’t feel the “vibe” of a brand or understand the soul of a creative project. The best way to avoid getting swept up in the hype isn’t to replace your team with bots, but to use technology to empower the humans who have the vision to lead, the taste to refine, and the empathy to connect.

Comparison Table: Hype vs. Reality (2026 Edition)

| The Hype (What CEOs say) | The Reality (What’s happening) |
| --- | --- |
| “AI is taking your job because it’s smarter.” | Insiders are quitting because they fear corporate recklessness is prioritizing “the demo” over human safety and truth. |
| “AI is replacing human roles” | 55% of leaders now regret AI-led layoffs. |
| “AI provides objective, high-speed insights.” | Researchers are blowing the whistle on “censored” data that hides AI’s failures and economic downsides. |
| “AI is a plug-and-play solution” | 95% of AI pilots fail due to poor integration. |
| “AI is a helpful assistant.” | Builders warn of “disempowerment patterns” that make humans over-reliant on (often wrong) machine judgment. |
| “AI increases productivity overnight” | Productivity often drops 15-20% initially due to the learning curve. |
| “Our AI is rigorously tested.” | Safety teams are resigning because they are being denied the tools to test the tech before it’s released to you. |
| “AI understands your customers” | AI “hallucinates”, lacks empathy and struggles with emotional nuance. Customers are revolting. |

Explore More on AI & Your Business:

The Truth About AI & Jobs

- Will AI Really Replace Junior Frontend Developers?

- Is AI Really Taking Your Web Design Job?

- AI Coding Tools Make Developers Slower – Even When They Think They’re Faster

Optimizing for the AI Era

- How to Check if Your Website is Showing Up in ChatGPT, Perplexity, Gemini, or Other AI Answers

- How to Optimize Your Webpages So They Get Found by Search Engines and AI (LLMs)?

- Google vs. AI Search: How to Get Your Business to Show Up in AI Search Results?

- AIO vs GEO vs SEO: What’s the Difference – and How to Optimize for All Three

- The AI Overview Era: Will Google’s New AI Steal Your Website Traffic? Solution?

AI Ethics & “Gone Rogue” Moments

- AI Gone Rogue Again? Perplexity Bots Bypass IP Blocks and Robots.txt

- When AI Goes Rogue: The Replit Database Disaster of July 2025

- AI Gone Rogue? Claude’s “Blackmail” Sparks New Fears About Agentic Models

- From Sidewalk Robots to Real‑World Limits: The Promise and Pitfalls of AI Deliveries

- Grok AI Faces Global Backlash as App Store Removal Calls Grow – Even as the Pentagon Embraces It

The Business of AI: Cost-Cutting & Big Tech Strategy

- Google’s Latest Buyouts: What They Reveal About Big Tech’s Cost-Cutting in the Age of AI

- Meta’s Latest Move: Using AI Chat Conversations to Personalize Ads

- Tech Companies Warn Against Using Their Own AI—What Are They Afraid Of?

- Microsoft 365 Price Hike: Paying the AI Premium

- Roblox Launches AI Age Verification and Trusted Connections to Make Teen Communication Safer

- Apple AI Dictation Error: Glitch or Prank? Why ‘Racist’ Was Transcribed as ‘Trump’

What do the people think? Check out the Me We Too polls:

I am amazed at what AI can do these days, but it also scares me a little.

And guess what? Me We Too is built on the UltimateWB website builder :-)

Did you ask ChatGPT which website builder you should use? Learn more about UltimateWB! We also offer web design packages if you would like your website designed and built for you.

Got a techy/website question? Whether it’s about UltimateWB or another website builder, web hosting, or other aspects of websites, just send in your question in the “Ask David!” form. We will email you when the answer is posted on the UltimateWB “Ask David!” section.

🧙‍♂️ “You’ve always had the power.”

Dorothy didn’t need the “Great” wizard – just trust in herself.

2026: AI is no substitute for human taste, accountability, or common sense.

Don’t buy into the wizard. Be one.

Pulling back the curtain on AI Hype vs. Reality:

🔗 azipurl.app/AI-hype